ChatGPT can generate 200 lines of MQL5 in 30 seconds. It will compile on the first try, produce a backtest with a respectable equity curve, and handle the basic strategy logic exactly as described. Then it will lose money in live trading — not because the strategy is wrong, but because the code is missing three things that only matter when real money is on the line: state persistence across terminal restarts, order context validation under live pool changes, and broker-specific constraint enforcement that the Strategy Tester never checks.

I have written before about how AI fits into professional EA development — as a productivity multiplier, not a replacement for domain expertise. This article goes deeper into the specific code-level failures. I review AI-generated MQL code regularly in code rescue projects at barmenteros FX. Across dozens of these reviews since 2024, the same three failure patterns appear in the majority of AI-generated EAs. The code is never wrong syntactically — it is wrong architecturally. And the dangerous part is that nothing in the standard testing workflow catches it.

It Compiles. It Backtests. It Loses Money.

Here is the typical sequence. A trader pastes a strategy description into ChatGPT: “Build an EA that trades EURUSD on H1 using RSI divergence with a 50-pip stop loss and 2:1 risk-reward.” The AI returns 200 lines of clean MQL5. The trader opens MetaEditor, compiles — zero errors, zero warnings. They run a backtest over two years of data and see a smooth equity curve with a 1.8 profit factor.

Confidence is high. They attach it to a demo account. It works for a few days. They move it to live with a small lot size. Three weeks in, after a VPS restart over the weekend, the EA opens three duplicate positions on Monday morning. The account is down 15% before the trader wakes up.

The code compiled. The backtest passed. The strategy was fine. What failed was everything between “this code runs” and “this code survives production.” Compilation proves syntax. A backtest proves the strategy logic handles historical data in a controlled, sequential environment. Neither one proves the code can handle a terminal restart, a broker rejecting an order modification, or two ticks arriving before the first order fills.

These are not edge cases. They are the standard operating conditions of live MetaTrader trading. And AI-generated code misses them consistently because the patterns that handle them are underrepresented in training data — nobody posts production resilience code to Stack Overflow.

Failure Pattern 1: No State Persistence — The Terminal Restart Problem

Every MetaTrader terminal restarts. VPS reboots happen. Brokers push updates. The terminal crashes during a volatility spike. Weekend restarts are routine. When that restart happens, every variable in the EA’s memory resets to its initial value.

AI-generated EAs store trade state in RAM — local variables, static variables, global scope counters. A common AI pattern looks like this:

```mql4
static int openTradeCount = 0;

void OnTick()
{
   if(openTradeCount < 1 && entryCondition)
   {
      OrderSend(...);
      openTradeCount++;
   }
}
```

In the Strategy Tester, this works perfectly. The tester never restarts mid-run. `openTradeCount` increments when a trade opens, the check prevents duplicates, and the backtest looks clean.

In live trading, after a VPS migration on a Tuesday night, the terminal restarts. The EA reloads, `openTradeCount` is re-initialized to 0, and `OnInit()` fires. The EA scans the market, sees its entry condition is still valid, and opens a new position. The previous position is still running. The EA now has two positions where it intended one. If the terminal restarts twice more over a volatile week, the account has four times the intended exposure.

I see this pattern in roughly 40% of AI-generated EA rescue projects. The fix is not a patch — it requires architectural changes. On every `OnInit()`, the EA must scan `OrdersTotal()` (MT4) or `PositionsTotal()` (MT5), map existing tickets by magic number and symbol, and reconstruct its internal state from the live order pool. The counter is never the source of truth — the broker’s order pool is.

```mql4
int OnInit()
{
   openTradeCount = CountMyOpenPositions(magicNumber, Symbol());
   printf("%s: Init | Recovered %d open positions", __FUNCTION__, openTradeCount);
   return(INIT_SUCCEEDED);
}
```

This is not complex code. But AI never generates it because the failure it prevents, a terminal restart with open positions, does not exist in the training environment.
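The `CountMyOpenPositions()` helper called above is not shown. A minimal MT4 sketch of what it could look like follows; the name and signature come from the call above, and counting only market positions (not pending orders) is an assumption.

```mql4
// Hypothetical helper for the OnInit() example: counts this EA's open market
// positions by scanning the live order pool (MT4 / MQL4).
int CountMyOpenPositions(int magic, string symbol)
{
   int count = 0;
   for(int i = OrdersTotal() - 1; i >= 0; i--)
   {
      if(!OrderSelect(i, SELECT_BY_POS, MODE_TRADES))
         continue;                               // order vanished between OrdersTotal() and OrderSelect()
      if(OrderMagicNumber() != magic || OrderSymbol() != symbol)
         continue;                               // another EA, a manual trade, or another symbol
      if(OrderType() == OP_BUY || OrderType() == OP_SELL)
         count++;                                // pending orders are ignored in this sketch
   }
   return(count);
}
```

Because the count is rebuilt from the order pool on every initialization, a restart can no longer reset it to zero while positions are still open.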

Failure Pattern 2: Missing Order Context Validation

AI-generated MQL code treats the order pool as a static list. It selects an order, reads its properties, and acts on them — assuming the pool stays frozen between operations. In the Strategy Tester, it does. In live trading, it does not.

A typical AI pattern for trailing a stop loss on open positions:

```mql4
for(int i = OrdersTotal() - 1; i >= 0; i--)
{
   if(OrderSelect(i, SELECT_BY_POS))
   {
      int ticket = OrderTicket();
      double currentSL = OrderStopLoss();
      // ... 15 lines of trailing logic ...
      OrderModify(ticket, OrderOpenPrice(), newSL, OrderTakeProfit(), 0);
   }
}
```

Two things can go wrong between the `OrderSelect()` at the top and the `OrderModify()` 15 lines later.

First, the order closes: a take profit hits, another EA closes the position, or the broker executes a stop out. The stored ticket now points to a closed order, and `OrderModify()` fails.

Second, and more subtle: if the trailing logic between select and modify contains any intervening `OrderSelect()` call (scanning for a related order, checking a hedge position), the internal selection shifts. The inline `OrderOpenPrice()` and `OrderTakeProfit()` at the modify line then return values from whatever order was last selected, not the order at the stored ticket. The modify targets the right order but sends it wrong parameters: a take profit from a different position, or an open price from a pending order that has nothing to do with the trade being trailed.

In the Strategy Tester, ticks process sequentially within a single EA context. Orders do not close between operations within the same tick, and the trailing logic runs without interruption. The vulnerable pattern above runs flawlessly across five years of backtest data.

The production-ready version re-selects by ticket before any modification:

```mql4
for(int i = OrdersTotal() - 1; i >= 0; i--)
{
   if(OrderSelect(i, SELECT_BY_POS))
   {
      int ticket = OrderTicket();
      // ... trailing logic calculates newSL ...

      // Re-validate before modifying
      if(OrderSelect(ticket, SELECT_BY_TICKET))
      {
         if(OrderCloseTime() == 0)   // Still open
         {
            // Check the result instead of assuming the modification succeeded
            if(!OrderModify(ticket, OrderOpenPrice(), newSL, OrderTakeProfit(), 0))
               printf("%s: OrderModify failed | Ticket=%d Error=%d", __FUNCTION__, ticket, GetLastError());
         }
      }
   }
}
```

The difference is a re-selection by ticket, an open-order check, and one concept: the order pool is live, and any operation that changes it, including your own `OrderSend()` or `OrderClose()`, invalidates previous selections. AI does not model this because sequential backtest execution never exposes it.
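When several code paths modify stops, the re-validation can be wrapped in one function. The sketch below is illustrative only: `ModifyStopLoss()` is a hypothetical name, not a library function. It also skips unchanged stops, because the MQL4 documentation states that calling `OrderModify()` with unchanged values produces error 1 (`ERR_NO_RESULT`).

```mql4
// Hypothetical helper: applies the re-select pattern to a single stop-loss
// modification and reports failures instead of ignoring them (MT4 / MQL4).
bool ModifyStopLoss(int ticket, double newSL)
{
   // Re-select by ticket: an earlier OrderSelect() may point at another order
   if(!OrderSelect(ticket, SELECT_BY_TICKET))
      return(false);
   if(OrderCloseTime() != 0)
      return(false);                             // closed since the loop first saw it

   int    digits = (int)MarketInfo(OrderSymbol(), MODE_DIGITS);
   double point  = MarketInfo(OrderSymbol(), MODE_POINT);
   double sl     = NormalizeDouble(newSL, digits);

   // An unchanged SL would make OrderModify() fail with error 1 (ERR_NO_RESULT)
   if(MathAbs(sl - OrderStopLoss()) < point / 2)
      return(true);

   // OrderOpenPrice() and OrderTakeProfit() are read right after the re-select,
   // so they belong to this ticket
   if(!OrderModify(ticket, OrderOpenPrice(), sl, OrderTakeProfit(), 0))
   {
      printf("%s: OrderModify failed | Ticket=%d Error=%d", __FUNCTION__, ticket, GetLastError());
      return(false);
   }
   return(true);
}
```

Inside the trailing loop, the modification then reduces to `ModifyStopLoss(ticket, newSL);`, and every failure is logged with its ticket and error code.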

Failure Pattern 3: Broker Constraint Blindness

AI generates order operations that ignore broker-specific constraints. These constraints are not part of the MQL language specification — they are runtime conditions enforced by the broker’s trade server. AI has no way to learn them from code samples alone, and the Strategy Tester does not enforce most of them with default settings.

Three sub-patterns I find in over 40% of AI-generated EA reviews across the past two years:

Stop level violation. Every broker defines a minimum distance between the current price and any stop loss or take profit: `MODE_STOPLEVEL` in MT4, `SYMBOL_TRADE_STOPS_LEVEL` in MT5. AI generates `OrderModify()` calls without checking this distance. The request is rejected with error 130 (invalid stops), and because the EA does not check the return value, nothing is logged and the rejection goes unnoticed. The trailing stop logic the trader paid to have never actually trails.

Lot step mismatch. Brokers define valid lot sizes in steps: 0.01, 0.10, or sometimes 0.001. AI calculates lot size as `AccountBalance() * riskPercent / (stopLoss * tickValue)` and sends the raw double to `OrderSend()`. The result might be 0.0847 lots, which is not a valid step. Depending on the platform and broker, the order is either rejected (in MT4, error 131, invalid trade volume) or adjusted to a valid step. If it is rejected and the return value is unchecked, the entry silently never happens. If it is adjusted, the trader's actual risk differs from the intended risk, and the EA never knows, because no error is returned.

Trade context busy. In MT4, only one trade operation can execute at a time per terminal. If another EA or a manual action is in progress, `IsTradeAllowed()` returns false. AI never generates this check. The EA fires `OrderSend()` during a busy context, gets error 146, and — because AI also rarely generates error handling — proceeds as if the order was never attempted. The entry signal passes, the order never opens, and the EA has no record of the failure.
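Instead of skipping an entry on the first busy check, an EA can wait briefly for the context to clear. This is a minimal MT4 sketch; `WaitForTradeContext()` and its 5-second default timeout are hypothetical choices, not a standard API.

```mql4
// Hypothetical helper: waits up to timeoutMs for the MT4 trade context instead
// of giving up on the first busy check. The 5000 ms default is an assumption.
bool WaitForTradeContext(int timeoutMs = 5000)
{
   uint start = GetTickCount();
   while(IsTradeContextBusy())
   {
      if(IsStopped())
         return(false);                          // EA is being removed or the terminal is closing
      if(GetTickCount() - start > (uint)timeoutMs)
      {
         printf("%s: Trade context still busy after %d ms", __FUNCTION__, timeoutMs);
         return(false);
      }
      Sleep(100);                                // Sleep() works in EAs and scripts, not in indicators
   }
   RefreshRates();                               // Bid/Ask may be stale after waiting
   return(IsTradeAllowed());                     // still false if trading is disabled
}
```

A caller would run `if(!WaitForTradeContext()) return;` immediately before `OrderSend()` and still check the `OrderSend()` return value, because the context can become busy again between the check and the call.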

A composite example of what AI produces vs. what survives production:

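```mql4
// AI-generated: compiles clean, fails live
double lots = AccountBalance() * 0.02 / (stopLossPips * tickValue);
OrderSend(Symbol(), OP_BUY, lots, Ask, 3, Ask - stopLossPips * Point, Ask + tpPips * Point);
```

```mql4
// Production-ready
double pip       = (Digits == 3 || Digits == 5) ? Point * 10 : Point;  // 1 pip = 10 points on 5/3-digit quotes
double tickSize  = MarketInfo(Symbol(), MODE_TICKSIZE);
double tickValue = MarketInfo(Symbol(), MODE_TICKVALUE);
double lotStep   = MarketInfo(Symbol(), MODE_LOTSTEP);
double minLot    = MarketInfo(Symbol(), MODE_MINLOT);
double maxLot    = MarketInfo(Symbol(), MODE_MAXLOT);
if(tickSize <= 0 || tickValue <= 0 || lotStep <= 0)
   return;                                       // symbol data not available yet

// Risk 2% of balance: loss per lot = SL distance in ticks * tick value
double slDistance = stopLossPips * pip;
double rawLots    = AccountBalance() * 0.02 / (slDistance / tickSize * tickValue);
double lots       = MathFloor(rawLots / lotStep) * lotStep;            // round down to the lot step
lots = MathMax(lots, minLot);
lots = MathMin(lots, maxLot);
lots = NormalizeDouble(lots, (int)MathMax(0, MathCeil(-MathLog10(lotStep))));  // strip float noise

// A buy closes at Bid, so MT4 measures its SL/TP distance from Bid, not Ask
double stopsLevel = MarketInfo(Symbol(), MODE_STOPLEVEL) * Point;
double sl = NormalizeDouble(Ask - slDistance, Digits);
double tp = NormalizeDouble(Ask + tpPips * pip, Digits);
if(Bid - sl < stopsLevel) sl = NormalizeDouble(Bid - stopsLevel, Digits);
if(tp - Bid < stopsLevel) tp = NormalizeDouble(Bid + stopsLevel, Digits);

if(!IsTradeAllowed())
{
   printf("%s: Trading disabled or trade context busy, skipping entry", __FUNCTION__);
   return;
}

// The magic number lets OnInit() reconciliation find this order after a restart
int ticket = OrderSend(Symbol(), OP_BUY, lots, Ask, 3, sl, tp, NULL, magicNumber);
if(ticket < 0)
{
   printf("%s: OrderSend failed | Error=%d", __FUNCTION__, GetLastError());
}
```

The difference is not cleverness. It is awareness of what the broker will do to the order after the EA sends it.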
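The composite example validates stops only for a buy at entry. Trailing logic modifies both buys and sells, and the reference price differs by direction. Below is a minimal MT4 sketch of a direction-aware check; `StopLossDistanceOK()` is a hypothetical name, and it deliberately ignores the freeze level (`MODE_FREEZELEVEL`), which some brokers also enforce on modifications near the current price.

```mql4
// Hypothetical helper: checks a proposed stop loss for an open market position
// against the broker's minimum stop distance. A buy closes at Bid and a sell
// at Ask, so each side's stop distance is measured from that price.
bool StopLossDistanceOK(int orderType, double sl, string symbol)
{
   double point      = MarketInfo(symbol, MODE_POINT);
   double stopsLevel = MarketInfo(symbol, MODE_STOPLEVEL) * point;
   double tolerance  = point / 2;                // absorbs floating-point noise in price differences

   if(orderType == OP_BUY)
      return(MarketInfo(symbol, MODE_BID) - sl >= stopsLevel - tolerance);
   if(orderType == OP_SELL)
      return(sl - MarketInfo(symbol, MODE_ASK) >= stopsLevel - tolerance);
   return(false);                                // pending orders are not handled in this sketch
}
```

In a trailing loop, the check runs after re-selecting the ticket, for example `if(StopLossDistanceOK(OrderType(), newSL, OrderSymbol()))`, so a too-tight stop is skipped instead of producing an unlogged error 130.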

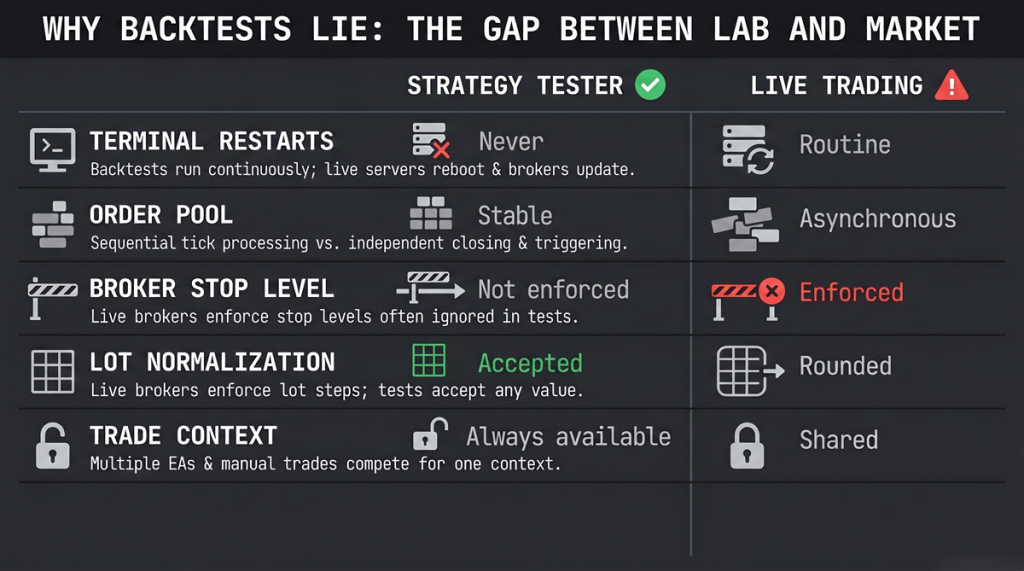

Why Strategy Tester Backtesting Doesn’t Catch Any of This

The Strategy Tester is designed to validate trading logic against historical price data. It is not a production environment simulator. The specific differences below explain why AI-generated code so often passes the backtest and fails live:

| Condition | Strategy Tester | Live Trading |
|---|---|---|
| Terminal restarts | Never happens during a test run | Routine: VPS reboots, crashes, updates |
| Order pool changes | Sequential tick processing; pool is stable within a tick | Asynchronous: positions close, pending orders trigger, other EAs act |
| Broker stop level | Often not enforced or set to 0 | Enforced: violations rejected |
| Lot normalization | Accepted as-is in most cases | Must match the broker's lot step; otherwise rejected or adjusted |
| Trade context | Single EA, always available | Shared: other EAs, manual trades, scripts compete for context |
| Spread behavior | Fixed or modeled from historical data | Dynamic: widens during news, session gaps, low liquidity |

AI learns from code that works in the Strategy Tester column. It has no training signal from the Live Trading column, because production failures are rarely posted as code samples; they are posted as forum complaints with no source code attached. This is the same reason more backtesting does not make your EA better: the tester validates a narrower reality than the one your EA will face.

This creates a systematic blind spot. The AI produces code optimized for the testing environment because that is the environment represented in its training data. The code is not broken — it is incomplete. It handles the problem the developer described and nothing else.

What to Check Before Running AI-Generated MQL Code Live

Before attaching any AI-generated EA to a live or demo account, run these six checks. Open the source file in MetaEditor and search for each pattern. If any check fails, the code is not production-ready.

- State persistence mechanism. Search for `GlobalVariableSet`, `GlobalVariableGet`, `FileWrite`, `FileRead`, or database operations, and confirm that `OnInit()` reads the stored state back. If the EA tracks any state (trade count, basket data, entry prices) and does not persist it to storage, it will lose that state on every restart.

- Position reconciliation on init. Look at the `OnInit()` function. Does it scan `OrdersTotal()` or `PositionsTotal()` and rebuild internal state from the live order pool? If `OnInit()` only sets defaults and initializes indicators, the EA assumes a clean slate on every restart — even when positions are open.

- Order re-selection before modification. Find every `OrderModify()` call. Trace backwards — is there an `OrderSelect(ticket, SELECT_BY_TICKET)` immediately before it? If the last `OrderSelect()` was by position index (`SELECT_BY_POS`) more than a few lines earlier, the EA is operating on potentially stale data.

- Stop level validation. Search for `MODE_STOPLEVEL` (MT4) or `SYMBOL_TRADE_STOPS_LEVEL` (MT5). If neither appears anywhere in the code, every `OrderModify()` and `OrderSend()` with a stop loss is vulnerable to broker rejection.

- Lot normalization. Search for `MODE_LOTSTEP` or `SYMBOL_VOLUME_STEP`. If lot size is calculated without rounding to the broker’s lot step, the actual position size will differ from the intended size.

- Trade context guard. Search for `IsTradeAllowed()` or, in MT4, `IsTradeContextBusy()`. If neither appears before `OrderSend()` or `OrderModify()`, the EA will fail silently when the trade context is busy, and it will not retry or log the failure.

This checklist covers the three most common failure categories. It does not cover strategy-specific edge cases — spread handling during news, partial fill management, or multi-symbol coordination issues that depend on the specific broker and EA architecture. A professional code review catches the patterns specific to your strategy and broker configuration that a general checklist cannot anticipate.
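For the first checklist item, this is roughly what persistence can look like for state the order pool cannot reconstruct, such as the bar on which the EA last entered. It is a minimal MT4 sketch using terminal global variables, which survive restarts (the terminal deletes them four weeks after their last access). The function names and key format are hypothetical, and an input named `magicNumber` is assumed, as in the `OnInit()` example.

```mql4
// Hypothetical sketch: persist the open time of the bar the EA last entered on,
// so a restart cannot re-trade the same signal. Assumes an input magicNumber.
string LastEntryKey()
{
   return(StringFormat("%s_%d_lastEntryBar", Symbol(), magicNumber));
}

void SaveLastEntryBar(datetime barTime)
{
   if(GlobalVariableSet(LastEntryKey(), (double)barTime) == 0)
   {
      printf("%s: GlobalVariableSet failed | Error=%d", __FUNCTION__, GetLastError());
      return;
   }
   GlobalVariablesFlush();                       // write to disk now, in case the terminal crashes later
}

datetime LoadLastEntryBar()
{
   if(!GlobalVariableCheck(LastEntryKey()))
      return(0);                                 // first run: nothing stored yet
   return((datetime)GlobalVariableGet(LastEntryKey()));
}
```

In use, `OnInit()` would load the value with `LoadLastEntryBar()`, the entry logic would skip when `Time[0]` equals it, and `SaveLastEntryBar(Time[0])` would run only after `OrderSend()` returns a valid ticket.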
